The momentous transformation putting data managers and their systems at the helm of modern enterprises has only begun. Industry leaders and experts agree that it's critical for data managers and team leaders to design today's data architectures to meet the demands of a digital economy, from cloud to real-time streaming to AI.

@tonyshan #techinnovation https://bit.ly/tonyshan https://bit.ly/tonyshan_X

Via Tony Shan

Kinetica, the real-time GPU-accelerated database for analytics and generative AI, unveiled at NVIDIA GTC its real-time vector similarity search engine that can ingest vector embeddings 5X faster than the previous market leader, based on the popular VectorDBBench benchmark.

Vercel’s Next.js has come a long way since its initial release in 2016. What would become the most popular React framework (according to their own website, at least) started as a simple solution for handling routing, SEO and server-side rendering within React applications, all of which were lacking in React at the time.

Fast forward several years, and Next has grown into an incredibly performant, well-supported and fast-developing framework, with millions of users worldwide.

E4S, the open source Extreme-Scale Scientific Software Stack for HPC-AI scientific applications, now incorporates AI/ML libraries and expands GPU support to include the Nvidia Grace and Grace Hopper architectures.

Much like organizations in every sector, federal government agencies run on data. The universal challenge of dealing with massive amounts of data every day, while leveraging the right data at the right time, is also very much front and center for government agencies. Amid the growth of computing platforms, mobile devices, and cloud environments, government agencies have steadily added new systems and capabilities, but they've often integrated those systems on an ad hoc basis—as point solutions to address specific needs.

In this contributed article, Tom Scott, CEO of Streambased, outlines the path event streaming systems have taken to arrive at the point where they must adopt analytical use cases and looks at some possible futures in this area.

There are three facets of developer productivity: speed, ease, and quality. In this column, we explore software quality, what has made it so difficult to define and measure, and theorize that there are four types of quality which influence each other.

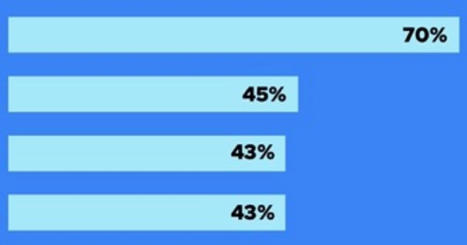

Forrester sees three possible futures for the low-code market: it will either keep going on its current trajectory, be accelerated by AI, or be slowed by AI as developers do more coding tasks with an AI assistant and don’t need the productivity gains of low-code as much.

Forrester says the first option — that low-code continues on its current growth trend — is the most likely scenario at the moment.

Project IDX shines with a VS Code look and feel, GitHub integration, workspace sharing, and AI-powered coding assistance. But it’s currently experimental, and available only in a limited preview.

What happens when Department of Energy (DOE) researchers join forces with chemists and biologists at the National Cancer Institute (NCI)? They use the most advanced high-performance computers to study cancer at the molecular, cellular and population levels.

In today’s data-driven world, data observability is a critical concept for organizations aiming to effectively manage their data. Simply put, it means having the ability to constantly monitor and understand the status of your data. This includes tracking where it comes from, where it’s going, whether it’s on time and in the right quantity, its quality, and any recent changes in behavior. Data observability helps answer essential questions about your data and ensures it remains reliable. In this article, we’ll delve into what data observability is, why it matters, the advantages it offers, and when it’s the right time to adopt it.
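The checks listed above (timeliness, quantity, quality) can be illustrated with a minimal sketch. This is not taken from the article: `BatchStats`, `check_batch`, the field names, and the default thresholds are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class BatchStats:
    """Hypothetical summary of one data load; field names are assumptions."""
    source: str            # where the data came from
    loaded_at: datetime    # when the batch arrived
    row_count: int         # how many rows arrived
    null_fraction: float   # share of missing values, 0.0 to 1.0


def check_batch(stats, now, max_age, expected_rows,
                tolerance=0.2, max_null_fraction=0.05):
    """Return a list of issue labels for one batch (empty list means healthy)."""
    issues = []
    # Freshness: did the data arrive on time?
    if now - stats.loaded_at > max_age:
        issues.append("stale")
    # Volume: is the row count within the tolerated band around the expectation?
    low = expected_rows * (1 - tolerance)
    high = expected_rows * (1 + tolerance)
    if not (low <= stats.row_count <= high):
        issues.append("volume")
    # Quality: are too many values missing?
    if stats.null_fraction > max_null_fraction:
        issues.append("quality")
    return issues


now = datetime(2024, 3, 20, 12, 0)
late_batch = BatchStats("orders", now - timedelta(hours=3), 500, 0.2)
print(check_batch(late_batch, now, timedelta(hours=1), 1000))
```

In practice the thresholds would likely come from historical behavior of each dataset rather than fixed constants, which is where tracking "recent changes in behavior" comes in.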

CNCF published the results of its latest microsurvey report on cloud-native FinOps and cloud financial management (CFM). Kubernetes has driven cloud spending up for 49% of respondents, while 28% stated their costs remain unchanged and 24% saved after migrating to Kubernetes. Respondents listed overprovisioning, lack of awareness and responsibility, and sprawl as the main factors for overspending.

To control compute – to squeeze or open the spigot of processing power – is to control AI. In doing so, AI can be steered toward beneficial results while avoiding, or punishing, bad ones. That’s the argument put forward in a 78-page white paper issued by 15 research centers and universities in the U.S., Canada and the UK – along with one company: OpenAI, whose launch of ChatGPT in November 2022 ignited the generative AI craze and caused, among other impacts, growing concerns about uncontrolled AI.

Imagine a world where the hum of engines and the smell of exhaust are things of the past. That's the future electric vehicles (EVs) promise us. To accelerate EV adoption, the transportation sector needs to leverage a new type of fuel altogether: data.

The Linux Foundation's recent survey-based insights report 'Maintainer Perspectives on Open Source Software Security' is available now. It was based on a survey of OSS maintainers and core contributors, to understand perspectives on OSS security and the uptake and adoption of security best practices.

This article describes how to implement a Raft Server consensus module in C++20 without using any additional libraries. The narrative is divided into three main sections:

- A comprehensive overview of the Raft algorithm
- A detailed account of the Raft Server's development
- A description of a custom coroutine-based network library

The implementation uses C++20 features, particularly coroutines, to present an effective and modern approach to building a critical component of distributed systems. The article demonstrates the practical application and benefits of C++20 coroutines, and it explores the challenges encountered, and how they were resolved, while building a consensus module such as Raft Server from the ground up. The Raft Server and network library repositories, miniraft-cpp and coroio, are available for further exploration.
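For readers unfamiliar with Raft, the core of leader election is the rule a server applies when a candidate asks for its vote. The sketch below follows that rule as described in the Raft paper. It is written in Python for brevity, is not code from miniraft-cpp or coroio, and the names `RaftState` and `handle_request_vote` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RaftState:
    """Hypothetical per-server state; only the fields the vote rule needs."""
    current_term: int = 0
    voted_for: Optional[int] = None
    log_terms: List[int] = field(default_factory=list)  # term of each log entry


def handle_request_vote(state, term, candidate_id, last_log_index, last_log_term):
    """Apply the RequestVote rule; return (current_term, vote_granted)."""
    # Reject candidates from an older term.
    if term < state.current_term:
        return state.current_term, False
    # A newer term resets any vote cast in the previous term.
    if term > state.current_term:
        state.current_term = term
        state.voted_for = None
    # Log indices are 1-based here; an empty log has index 0 and term 0.
    my_last_index = len(state.log_terms)
    my_last_term = state.log_terms[-1] if state.log_terms else 0
    # The candidate's log must be at least as up to date as ours:
    # compare last terms first, then last indices.
    up_to_date = (last_log_term, last_log_index) >= (my_last_term, my_last_index)
    # Grant at most one vote per term (repeating the same vote is allowed).
    if state.voted_for in (None, candidate_id) and up_to_date:
        state.voted_for = candidate_id
        return state.current_term, True
    return state.current_term, False


server = RaftState(current_term=2, log_terms=[1, 2])
print(handle_request_vote(server, 3, 8, 2, 2))
```

A full server would also persist `current_term` and `voted_for` before replying and would step down to follower when it sees a newer term; both are omitted here to keep the sketch short.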
